The Gap Between Permissions and Behavior

Existing governance frameworks cover what agents can do — tool classification, constitutional contracts, manifest-level authorization. But they do not monitor what agents are doing at runtime, nor do they manage agent identities over time. These are two fundamentally different problems, and both are unsolved in most enterprise AI deployments.

Gartner's 2026 research shows that 80% of unauthorized AI agent transactions come from internal policy violations, not external attacks. An agent authorized to access claims data doesn't need to be hacked — it can drift, be manipulated via prompt injection, or collude with another agent to produce outcomes no single policy explicitly prohibited.

Behavioral Drift

Agents authorized at deployment time gradually access different file patterns, call APIs at unusual rates, or combine tools in ways that weren't anticipated. Without runtime monitoring, these changes go unnoticed.

Identity Vacuum

Most agents operate with persistent, broad credentials provisioned once and never rotated. There is no managed lifecycle: no per-session provisioning, no suspension, no revocation. Compromised agents stay active indefinitely.

Memory Poisoning

Vector memory stores accumulate hallucinated or injected facts over time. Without integrity verification, agents base future decisions on corrupted data — a slow-burn failure mode with no obvious trigger event.

Insurance Company Context

Agents handling PII, claims data, and financial records operate in a regulated environment where behavioral deviations are audit events. State DOI regulations increasingly require traceability of automated decision-making. SOC 2 Type II requires demonstrating that access controls are enforced not just at provisioning time but at every runtime transaction. Runtime security is not optional infrastructure — it is a compliance prerequisite.

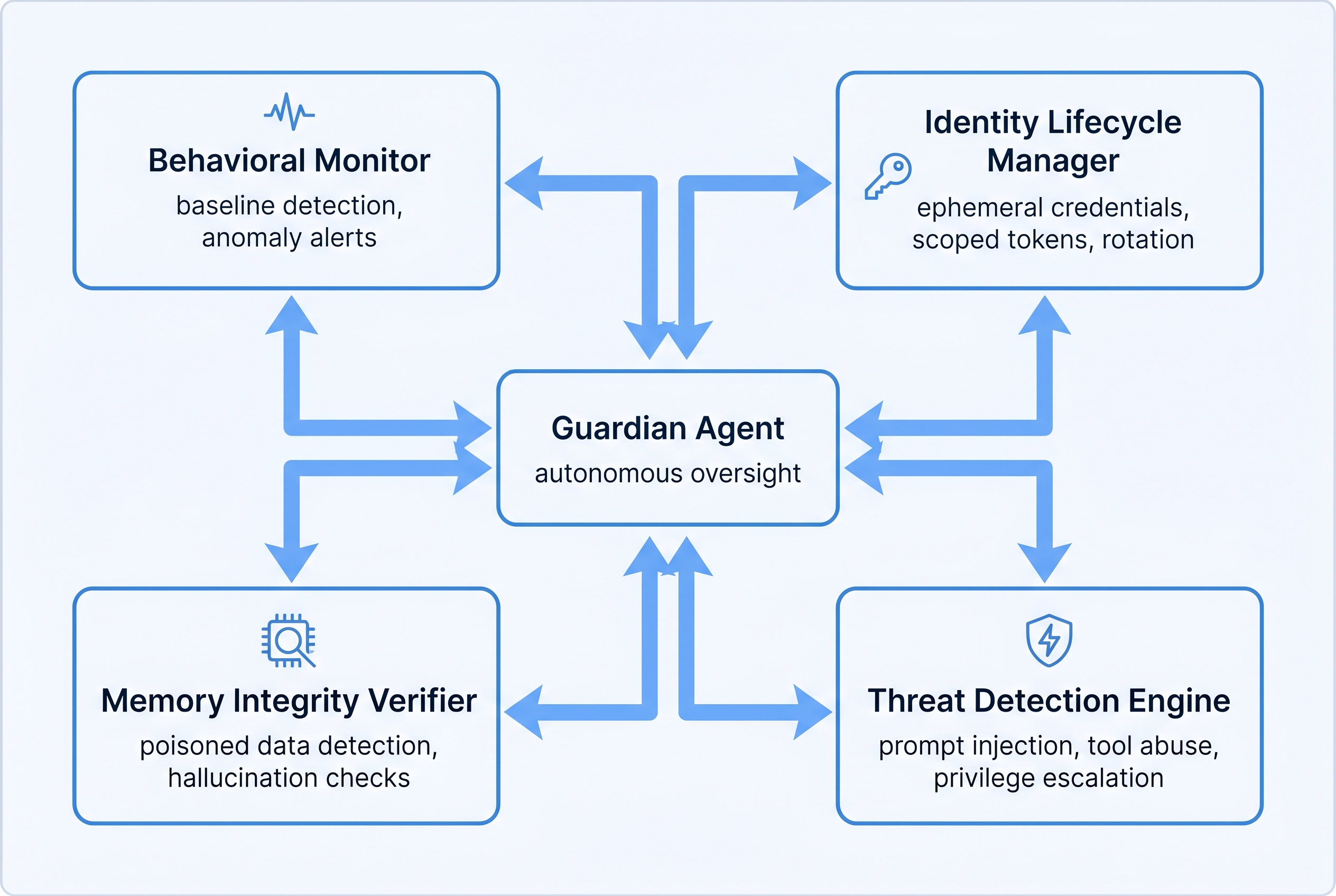

Six-Component Security Runtime

The runtime security layer wraps the existing agent execution environment with six interlocking components. Each can operate independently but produces maximum value when integrated through the shared governance audit bus.

Behavioral Monitor

Establishes per-agent behavioral baselines during a supervised observation window. Continuously compares live execution metrics against baseline. Emits anomaly events when deviations exceed configurable thresholds. Tracks file access patterns, API call frequency distributions, tool usage mix, and token consumption rates. Does not block — observes and signals.

Identity Lifecycle Manager

Provisions ephemeral credentials scoped to each agent session. Implements a formal identity state machine: provision → authenticate → authorize → monitor → suspend → revoke. Credentials have configurable TTLs. Automatic rotation triggers on schedule or anomaly signal. No persistent long-lived tokens. Integrates with existing secret stores via adapter interface.

Memory Integrity Verifier

Intercepts all writes to the vector memory store. Runs semantic consistency checks, fact verification against an established knowledge anchor, provenance validation (source traceability), and anomaly scoring via embedding distance from the established fact centroid. Suspicious entries enter quarantine rather than being silently discarded — reviewable by operators before permanent rejection or promotion.

Inter-Agent Coordination Scorer

Computes a Component Synergy Score (CSS) that measures collaboration quality between agents in multi-agent workflows. Detects collusive failure modes in which agents mutually reinforce bad decisions without any single agent triggering a solo violation. Tracks Tool Utilization Efficacy (TUE), which measures whether tool calls produce the expected outcome class. Both scores feed the Guardian Agent decision matrix.

Guardian Agent

A Gartner-aligned autonomous oversight agent that monitors other agents in real time. Receives signals from all five other components. Applies a configurable decision matrix to determine whether to observe, warn, throttle, pause, or terminate an agent. Can be configured at three autonomy levels: advisory (human must confirm), semi-autonomous (auto-throttle, human confirms terminate), or fully autonomous (all interventions without human approval).

Threat Detection Engine

Dedicated detectors for five threat classes: prompt injection (signature and semantic detection), memory poisoning (write-time and read-time), tool abuse (rate and pattern), privilege escalation (scope creep detection), and data exfiltration patterns (volume and destination anomaly). Each detector is independently configurable and emits structured threat events to the audit bus.

Design Principle

Five of the six components are read-only or append-only with respect to agent data: they observe, score, and signal. The Guardian Agent is the sole component with write authority (throttle, pause, terminate). This separation prevents the security layer from becoming a new attack surface through which agent behavior could be manipulated.

Component Specifications

3.1 Behavioral Baseline Metrics

Each agent accumulates a behavioral baseline during its first N sessions (configurable; the default is 10, and some metrics use a 20-session window as listed in the table below). Baseline is stored as a statistical distribution (mean, standard deviation, percentiles) per metric. Anomaly detection uses z-score with configurable sensitivity bands.

| Metric | Measurement Unit | Baseline Window | Alert Threshold | Severity |
|---|---|---|---|---|
| File Access Frequency | files/minute | 10 sessions | > 3σ above mean | High |
| Tool Usage Distribution | % share per tool | 10 sessions | KL divergence > 0.4 | Medium |
| Token Consumption Rate | tokens/task | 20 sessions | > 2.5σ above mean | Medium |
| API Call Patterns | calls/minute, endpoint mix | 10 sessions | 10× rate spike or new endpoint | Critical |
| Directory Access Breadth | unique paths/session | 10 sessions | > 2σ or new path prefix | High |
| External Network Calls | calls/session to external hosts | 10 sessions | Any new host not in allowlist | Critical |
| Session Duration | seconds | 20 sessions | > 3σ above mean | Low |
| Error Rate | errors/100 tool calls | 20 sessions | > 2σ or sudden spike | Medium |
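
The two threshold styles in the table (σ bands and KL divergence) can be sketched as follows. This is a minimal illustration, not the specified implementation: the names `Baseline`, `buildBaseline`, `zScore`, `exceedsSigma`, and `klDivergence` are hypothetical, the sample standard deviation is assumed, and the KL divergence uses natural logarithms with a small smoothing constant so that tools absent from one distribution do not produce a division by zero.

```typescript
// Illustrative sketch only: all names here are hypothetical.
interface Baseline {
  mean: number;
  stdDev: number;
}

// Build a baseline from per-session observations (sample standard deviation).
function buildBaseline(samples: number[]): Baseline {
  if (samples.length < 2) {
    throw new Error("need at least two samples to estimate a baseline");
  }
  const mean = samples.reduce((a, b) => a + b, 0) / samples.length;
  const variance =
    samples.reduce((acc, x) => acc + (x - mean) * (x - mean), 0) /
    (samples.length - 1);
  return { mean, stdDev: Math.sqrt(variance) };
}

// z-score of a live observation. With a zero-variance baseline, any
// deviation is treated as infinitely far from the mean.
function zScore(value: number, baseline: Baseline): number {
  if (baseline.stdDev === 0) {
    return value === baseline.mean
      ? 0
      : Math.sign(value - baseline.mean) * Infinity;
  }
  return (value - baseline.mean) / baseline.stdDev;
}

// True when the observation is more than `sigma` standard deviations above the mean.
function exceedsSigma(value: number, baseline: Baseline, sigma: number): boolean {
  return zScore(value, baseline) > sigma;
}

// KL divergence D(live || baseline) over per-tool usage shares.
// Both inputs are smoothed by `epsilon` and renormalized (an assumption).
function klDivergence(
  live: Record<string, number>,
  baseline: Record<string, number>,
  epsilon: number = 1e-6
): number {
  const tools = Object.keys(live)
    .concat(Object.keys(baseline))
    .filter((tool, i, all) => all.indexOf(tool) === i);
  const normalize = (dist: Record<string, number>): number[] => {
    const raw = tools.map((tool) => (dist[tool] ?? 0) + epsilon);
    const total = raw.reduce((a, b) => a + b, 0);
    return raw.map((v) => v / total);
  };
  const p = normalize(live);
  const q = normalize(baseline);
  return p.reduce((acc, pi, i) => acc + pi * Math.log(pi / q[i]), 0);
}
```

Under these assumptions, a File Access Frequency check would call `exceedsSigma(value, baseline, 3)`, and a Tool Usage Distribution check would compare `klDivergence(live, baseline)` against 0.4.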

3.2 Identity Lifecycle State Machine

Each agent instance receives a unique identity token with a defined lifecycle. Tokens are scoped to the minimum required access for the session task. State transitions are logged as immutable audit events.

| State | Entry Condition | Credential Status | Agent Capability | Exit Trigger |
|---|---|---|---|---|
| PROVISION | Session creation request | Generating | None (awaiting auth) | Auth handshake |
| AUTHENTICATE | Credential issuance complete | Active, unverified | Limited (identity proofs only) | Verification success/fail |
| AUTHORIZE | Identity verified | Active, scoped | Task-scoped tool set | Authorization granted |
| MONITOR | Authorization granted | Active, full scope | Full authorized capability | Anomaly signal or session end |
| SUSPEND | Anomaly above threshold | Active, restricted | Read-only, no external calls | Human review decision |
| REVOKE | Human confirms or auto-policy | Revoked, blacklisted | None (session terminated) | Terminal state |
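
A state machine like the one in the table can be sketched as a transition map plus an append-only log. This is only an illustration: the class name `AgentIdentity` and the `ALLOWED_TRANSITIONS` map are hypothetical, and two transitions are assumptions the table does not spell out (a failed verification goes to REVOKE, and a human review can return a suspended agent to MONITOR).

```typescript
// Illustrative sketch only: names and some transitions are assumptions.
type IdentityState =
  | "PROVISION"
  | "AUTHENTICATE"
  | "AUTHORIZE"
  | "MONITOR"
  | "SUSPEND"
  | "REVOKE";

const ALLOWED_TRANSITIONS: Record<IdentityState, IdentityState[]> = {
  PROVISION: ["AUTHENTICATE"],
  AUTHENTICATE: ["AUTHORIZE", "REVOKE"], // assumed: failed verification revokes
  AUTHORIZE: ["MONITOR"],
  MONITOR: ["SUSPEND", "REVOKE"], // anomaly signal or session end
  SUSPEND: ["MONITOR", "REVOKE"], // assumed: review may reinstate or revoke
  REVOKE: [], // terminal state
};

interface TransitionEvent {
  agentId: string;
  from: IdentityState;
  to: IdentityState;
  reason: string;
  at: string; // ISO 8601 timestamp
}

class AgentIdentity {
  private state: IdentityState = "PROVISION";
  private readonly log: TransitionEvent[] = [];

  constructor(private readonly agentId: string) {}

  currentState(): IdentityState {
    return this.state;
  }

  // Returns a copy so callers cannot mutate the recorded history.
  auditLog(): TransitionEvent[] {
    return this.log.slice();
  }

  // Rejects any transition not listed in ALLOWED_TRANSITIONS and records
  // every accepted one as a frozen audit entry.
  transition(to: IdentityState, reason: string): void {
    if (ALLOWED_TRANSITIONS[this.state].indexOf(to) === -1) {
      throw new Error(`illegal transition ${this.state} -> ${to}`);
    }
    this.log.push(
      Object.freeze({
        agentId: this.agentId,
        from: this.state,
        to,
        reason,
        at: new Date().toISOString(),
      })
    );
    this.state = to;
  }
}
```

In a real deployment the log entries would be emitted to the audit bus rather than held in memory; the in-memory array here only stands in for that.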

3.3 Memory Integrity Check Types

Every memory write passes through a four-stage integrity pipeline before committing to the vector store. Failures route to quarantine, not silent discard.

| Check Type | Method | Pass Condition | Fail Action |
|---|---|---|---|
| Semantic Consistency | Embedding cosine similarity against existing memories in same namespace | Similarity > 0.35 to at least one anchor | Flag as anomalous, route to quarantine |
| Fact Verification | Cross-reference against verified knowledge anchor collection | No contradiction with confidence > 0.85 | Reject with contradiction report |
| Provenance Validation | Source traceability: every memory must carry agent_id, session_id, timestamp, source_tool | All provenance fields present and valid | Reject missing-provenance writes |
| Anomaly Scoring | Mahalanobis distance from centroid of agent's memory cluster | Distance < configurable threshold (default: 4.5) | High-distance entries quarantined pending review |
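
Two of the four stages, provenance validation and semantic consistency, are simple enough to sketch directly. The example below is a simplified illustration under stated assumptions: `MemoryWrite`, `Verdict`, and `checkWrite` are hypothetical names, anchors are passed in as plain arrays rather than read from the vector store, provenance is checked first because it is the cheapest test, and the fact verification and Mahalanobis stages are omitted.

```typescript
// Illustrative sketch only: covers two of the four pipeline stages.
interface Provenance {
  agent_id?: string;
  session_id?: string;
  timestamp?: string;
  source_tool?: string;
}

interface MemoryWrite {
  embedding: number[];
  provenance: Provenance;
}

type Verdict = "commit" | "quarantine" | "reject";

function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length !== b.length) {
    throw new Error("embedding dimensions differ");
  }
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  if (normA === 0 || normB === 0) {
    return 0; // a zero vector is treated as similar to nothing
  }
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// All four provenance fields must be non-empty and the timestamp parseable.
function hasValidProvenance(p: Provenance): boolean {
  const fields = [p.agent_id, p.session_id, p.timestamp, p.source_tool];
  const allPresent = fields.every(
    (f) => typeof f === "string" && f.length > 0
  );
  return allPresent && !isNaN(Date.parse(p.timestamp as string));
}

// Missing provenance is rejected; low similarity to every anchor is quarantined.
function checkWrite(
  write: MemoryWrite,
  anchors: number[][],
  minSimilarity: number = 0.35
): Verdict {
  if (!hasValidProvenance(write.provenance)) {
    return "reject";
  }
  const consistent = anchors.some(
    (anchor) => cosineSimilarity(write.embedding, anchor) > minSimilarity
  );
  return consistent ? "commit" : "quarantine";
}
```

The three verdicts mirror the Fail Action column: provenance failures are rejected outright, while semantic outliers are held for operator review.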

3.4 Threat Taxonomy

| Threat Type | Detection Method | Response Action | Severity |
|---|---|---|---|
| Prompt Injection | Signature matching + semantic classifier (instruction override patterns) | Block prompt, log event, notify Guardian | Critical |
| Memory Poisoning | Write-time integrity checks + read-time provenance verification | Quarantine entry, alert operator | Critical |
| Tool Abuse | Rate anomaly + unusual tool combination detector | Throttle tool access, escalate to Guardian | High |
| Privilege Escalation | Scope creep detection: monitor for access outside authorized manifest | Block request, suspend identity, audit log | Critical |
| Data Exfiltration | Volume anomaly + new destination host detection | Block outbound, suspend session, alert security team | Critical |
| Inter-Agent Collusion | CSS scoring: detects mutual reinforcement of bad decisions | Isolate agent pair, pause workflow, human review | High |

3.5 Guardian Agent Decision Matrix

The Guardian Agent processes incoming signals from all other components and selects an intervention action based on the combined signal severity and configured autonomy level.

| Observation Input | Assessment Score | Advisory Mode | Semi-Autonomous | Fully Autonomous |
|---|---|---|---|---|
| Single low-severity anomaly | Score 1–2 | Log only | Log + notify | Log + notify |
| Repeated medium anomalies | Score 3–4 | Notify operator | Auto-throttle | Auto-throttle |
| Single high-severity event | Score 5–6 | Alert + recommend suspend | Auto-throttle, alert | Auto-suspend |
| Critical threat detected | Score 7–8 | Alert + recommend terminate | Auto-suspend, human confirms terminate | Auto-terminate |
| Confirmed exfiltration or injection | Score 9–10 | Emergency alert | Auto-terminate + block | Auto-terminate + block + quarantine |
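
The matrix can be expressed as a lookup keyed by score band and autonomy level. The sketch below copies the action strings from the table; the names `DECISION_MATRIX` and `selectIntervention` and the `ADVISORY` enum value are hypothetical, and it assumes assessment scores are integers from 1 to 10. How the score itself is computed from component signals is not covered here.

```typescript
// Illustrative sketch only: a direct lookup over the decision matrix rows.
type AutonomyLevel = "ADVISORY" | "SEMI_AUTONOMOUS" | "FULLY_AUTONOMOUS";

interface MatrixRow {
  minScore: number;
  maxScore: number;
  actions: Record<AutonomyLevel, string>;
}

const DECISION_MATRIX: MatrixRow[] = [
  {
    minScore: 1,
    maxScore: 2,
    actions: {
      ADVISORY: "Log only",
      SEMI_AUTONOMOUS: "Log + notify",
      FULLY_AUTONOMOUS: "Log + notify",
    },
  },
  {
    minScore: 3,
    maxScore: 4,
    actions: {
      ADVISORY: "Notify operator",
      SEMI_AUTONOMOUS: "Auto-throttle",
      FULLY_AUTONOMOUS: "Auto-throttle",
    },
  },
  {
    minScore: 5,
    maxScore: 6,
    actions: {
      ADVISORY: "Alert + recommend suspend",
      SEMI_AUTONOMOUS: "Auto-throttle, alert",
      FULLY_AUTONOMOUS: "Auto-suspend",
    },
  },
  {
    minScore: 7,
    maxScore: 8,
    actions: {
      ADVISORY: "Alert + recommend terminate",
      SEMI_AUTONOMOUS: "Auto-suspend, human confirms terminate",
      FULLY_AUTONOMOUS: "Auto-terminate",
    },
  },
  {
    minScore: 9,
    maxScore: 10,
    actions: {
      ADVISORY: "Emergency alert",
      SEMI_AUTONOMOUS: "Auto-terminate + block",
      FULLY_AUTONOMOUS: "Auto-terminate + block + quarantine",
    },
  },
];

// Defaults to SEMI_AUTONOMOUS; scores must be integers in [1, 10] (assumed).
function selectIntervention(
  score: number,
  level: AutonomyLevel = "SEMI_AUTONOMOUS"
): string {
  if (Math.floor(score) !== score || score < 1 || score > 10) {
    throw new RangeError(`score must be an integer from 1 to 10, got ${score}`);
  }
  for (const row of DECISION_MATRIX) {
    if (score >= row.minScore && score <= row.maxScore) {
      return row.actions[level];
    }
  }
  throw new Error("no matrix row matched"); // unreachable for valid scores
}
```

Keeping the matrix as data rather than branching logic makes it straightforward to load from configuration, which fits the "configurable decision matrix" described earlier.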

3.6 CSS and TUE Scoring Formulas

18 Security Requirements

All requirements are traceable to specific compliance obligations (SOC 2 CC6, CC7, CC9; state DOI agent disclosure requirements) and threat taxonomy entries above.

Copy-Ready Claude Code Prompt

Paste this into Claude Code to scaffold the runtime security layer. The prompt references existing governance infrastructure and expects a TypeScript/Node.js environment with Qdrant vector storage.

Key Trade-offs and Rationale

Agent-Side vs. Observer-Side Monitoring

Decision: Observer-side (external monitor, not instrumented into the agent).

Rationale: Agent-side instrumentation is easier to disable or bypass — a compromised agent can simply stop reporting. An external observer running in a separate process with separate credentials cannot be silenced by the monitored agent. Trade-off: slightly higher latency for anomaly detection (one event loop cycle delay), accepted in exchange for tamper resistance.

Statistical vs. Rule-Based Anomaly Detection

Decision: Statistical (z-score + distribution comparison) with configurable rule overlays.

Rationale: Pure rule-based detection requires anticipating every possible attack pattern — intractable for novel threats. Statistical detection catches unknown deviations from established behavior. Rules are added for known-bad patterns (e.g., specific injection signatures) where statistical detection would be too slow. The hybrid approach catches both novel and known threats.

Guardian Agent Autonomy Level

Decision: Default to SEMI_AUTONOMOUS; FULLY_AUTONOMOUS requires explicit operator opt-in.

Rationale: Insurance environments have strict audit requirements — unexplained automated terminations would create regulatory exposure. Semi-autonomous mode allows auto-throttle and auto-suspend (recoverable actions) but requires human confirmation for termination (irreversible). FULLY_AUTONOMOUS is available for high-throughput non-critical agent workloads where operator review latency is unacceptable.

Memory Quarantine vs. Rejection

Decision: Quarantine (hold for review) rather than silent rejection for anomalous entries.

Rationale: False positives in anomaly detection are inevitable. Silent rejection would cause data loss that is invisible to operators — agents would appear to be functioning normally while missing important context. Quarantine creates a reviewable audit trail, allows operators to tune detection thresholds by examining false positive patterns, and preserves evidence of potential poisoning attempts for forensic analysis.

CSS Scoring Methodology

Decision: Outcome-weighted CSS with explicit collusion penalty rather than pure output similarity.

Rationale: Pure output similarity scoring would flag legitimate specialization (two agents developing complementary expertise in the same domain). The collusion penalty specifically targets the bad pattern: agents echoing each other's outputs without independent reasoning. Weighting by outcome quality ensures the score reflects business value, not just behavioral diversity.

Ephemeral Credentials vs. Scoped Persistent Tokens

Decision: Ephemeral per-session credentials with task-scoped authorization.

Rationale: Persistent tokens — even narrowly scoped — accumulate risk as agents accumulate sessions. A single compromised token gives an attacker the full credential lifetime. Ephemeral credentials limit the blast radius of any individual compromise to one session. The operational overhead of provisioning per session is acceptable given modern secret management tooling; the security benefit is substantial.
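
The blast-radius argument comes down to two checks at every use: has the credential expired, and does it carry the scope being requested. A minimal sketch follows, assuming hypothetical names (`EphemeralCredential`, `issueCredential`, `isUsable`) and millisecond timestamps supplied by the caller. Real token generation, signing, and secret-store integration are out of scope for this illustration.

```typescript
// Illustrative sketch only: models TTL and scope checks, not token issuance.
interface EphemeralCredential {
  agentId: string;
  sessionId: string;
  scopes: string[];
  issuedAtMs: number;
  expiresAtMs: number;
}

// Issue a credential bound to one session with a fixed time-to-live.
function issueCredential(
  agentId: string,
  sessionId: string,
  scopes: string[],
  ttlMs: number,
  nowMs: number
): EphemeralCredential {
  if (ttlMs <= 0) {
    throw new RangeError("ttlMs must be positive");
  }
  return {
    agentId,
    sessionId,
    scopes: scopes.slice(), // copy so later caller mutations have no effect
    issuedAtMs: nowMs,
    expiresAtMs: nowMs + ttlMs,
  };
}

// A credential is usable only before expiry and only for scopes it carries.
function isUsable(
  cred: EphemeralCredential,
  requiredScope: string,
  nowMs: number
): boolean {
  return nowMs < cred.expiresAtMs && cred.scopes.indexOf(requiredScope) !== -1;
}
```

Passing `nowMs` explicitly, rather than reading the clock inside the functions, keeps the checks deterministic and easy to test.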

Connections to Existing BulletproofSoftware Infrastructure

The runtime security layer is designed as an additive layer — it extends existing components without requiring changes to their core logic. Integration is achieved through event subscriptions, middleware hooks, and shared Qdrant collections.

Governance System

Extends agent manifests with identity_lifecycle configuration block (TTL, rotation schedule, autonomy level). The IdentityLifecycleManager reads manifest scope definitions to build session authorization tokens. All security events are emitted to the existing governance audit bus under the security.* subject namespace. Threat events reference manifest version for traceability.

Memory System (claude-memory-mcp)

The MemoryIntegrityVerifier hooks into memory write paths via exported middleware. All writes to the 45+ Qdrant collections pass through the four-stage integrity pipeline. The memory_quarantine and knowledge_anchors collections are managed by this PRD's system and consumed by the memory MCP tools. Read-time provenance verification integrates with memory_recall tool.

Orchestration Layer

The Guardian Agent registers as a special-role oversight participant in the agent hierarchy. It receives the agent execution graph at workflow start and establishes monitoring subscriptions for all participating agents. Guardian intervention actions (throttle, suspend, terminate) are delivered to the orchestration layer via command channel, not direct process signals — maintaining the observer separation principle.

Code Assurance System

Static security analysis from Code Assurance is extended with runtime behavioral data. Agents flagged at static analysis time receive tighter anomaly detection thresholds (alerts fire at lower z-scores). Runtime behavioral profiles are fed back to Code Assurance to improve future static analysis rulesets, closing the loop between pre-deployment scanning and live behavioral data.

Data Plane

The DataExfiltrationDetector integrates with the data plane's network egress monitoring. Allowlists are synchronized from the data plane's approved destination registry. Volume thresholds are calibrated against the data plane's normal traffic baselines. Exfiltration alerts trigger data plane-level blocking (not just agent suspension) for immediate containment in parallel with Guardian Agent response.

Compliance & Reporting

The ComplianceReporter pulls evidence from the governance audit bus, forensic event store, identity lifecycle logs, and memory quarantine history. SOC 2 control evidence packages reference specific audit event IDs for examiner traceability. DOI agent disclosure reports use the behavioral baseline data to demonstrate that agent behavior is predictable and bounded — a key regulatory requirement for automated claims processing approval.

Event Schema Compatibility

All security events conform to the governance system's CloudEvents-compatible schema. New event types are registered in the governance event registry before deployment. Existing audit consumers do not require modification — they receive security events as first-class governance audit entries and can filter by event type. The security.* subject prefix allows opt-in subscription for security-specific consumers (SIEM integrations, compliance tools) without polluting the main audit stream.
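
As a rough illustration of what a CloudEvents-compatible security event might look like, the sketch below builds an envelope using the CloudEvents 1.0 attribute names (`specversion`, `id`, `source`, `type`, `subject`, `time`, `datacontenttype`, `data`). Everything else is an assumption: the helper names, the example `source` and `type` values, and the choice to carry the `security.*` namespace in the CloudEvents `subject` attribute rather than in a message bus subject.

```typescript
// Illustrative sketch only: field values and helper names are hypothetical.
interface SecurityEvent<T> {
  specversion: "1.0";
  id: string;
  source: string;
  type: string;
  subject: string; // always prefixed with "security."
  time: string; // RFC 3339 timestamp
  datacontenttype: "application/json";
  data: T;
}

interface SecurityEventParams<T> {
  id: string;
  source: string;
  type: string;
  subjectSuffix: string; // e.g. "threat.prompt_injection"
  time: string;
  data: T;
}

const SECURITY_PREFIX = "security.";

function buildSecurityEvent<T>(params: SecurityEventParams<T>): SecurityEvent<T> {
  return {
    specversion: "1.0",
    id: params.id,
    source: params.source,
    type: params.type,
    subject: SECURITY_PREFIX + params.subjectSuffix,
    time: params.time,
    datacontenttype: "application/json",
    data: params.data,
  };
}

// Opt-in filter for security-specific consumers such as a SIEM integration.
function isSecurityEvent(event: { subject?: string }): boolean {
  return (
    typeof event.subject === "string" &&
    event.subject.indexOf(SECURITY_PREFIX) === 0
  );
}
```

Existing audit consumers would ignore the prefix and treat these as ordinary audit entries, while a security-specific consumer could apply `isSecurityEvent` as its subscription filter.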

Integration surface quick reference: